The chamber always looks smaller than I would have expected. I start by calibrating my microphone with a small device made for this exact model. I measure the volume from my test input, and the software automatically compensates the input gain. This microphone is a near perfect test microphone. It has a full range frequency response. Any correction needed would be less than ±1 dB at any point in the frequency spectrum. I place the radio under test in the center of the suspended floor and set the microphone in its stand, aimed at the center of the output speaker, one inch away. I shut and lock the door. It’s so quiet in this chamber, I can hear the blood pulse through my ears. It’s anechoic, of course. I’m going to measure the audio frequency response of our new digital tier of radios. Motorola has once again innovated in the radio business and helped to free more space in the radio frequency spectrum by moving communications to a narrow bandwidth digital solution. I start at 10% of maximum volume and eventually work my way up to 100%. By 70% of maximum volume, the Total Harmonic Distortion (THD) is way above specification. It’s off the charts. And it sounds like it too. You can hear the test signal degrade and push the device into new sonic territory. I open the door and run a sweep at maximum volume just to experience the loudness.

For a musician who develops their own recording software, it’s imperative to understand the basics of digital signal processing. Happy accidents are welcome, but if you’re unable to dissect exactly what’s happening, and why, you’ll likely find it difficult to expand on something that you find interesting. It really is only after many iterations of exploration that you find something that truly becomes your own, part of your expression. And so I want to take a moment to discuss something that’s at the center of almost all forms of recorded music.

At many points in the signal chain, throughout the process of making a record, what you put in is likely not going to match what you get out. It’s inevitable. With an electric guitar, the signal that emanates from a pickup will be corrupted by an amplifier. Once recorded with a microphone placed in front of a speaker, there may be additional modification to this signal from outboard gear via a recording console. Lastly, you may record to magnetic tape, or more likely, use a tape simulation plugin. And of course, you’ll need a limiter to ensure that the final result does not exceed the specifications for the medium that you’re targeting.

Corruption, modification, limiting. Distortion has many names. It’s a non-linear phenomenon: it shows up in any system that is unable to linearly reproduce its input. Guitarists may know it as overdrive, fuzz, or distortion. Recording engineers may know it as compression, or limiting. Mastering engineers may know it as limiting, or saturation. Despite our best engineering efforts to remove distortion from recording equipment, controlling the amount of distortion applied at various points in the signal chain is virtually required to satisfy almost all genres of music.

Vocals need to pop, drums need to slam, guitars need to scream, or not. The mix needs to be balanced. Each instrument needs to be able to be distinguished from the rest, and the song just has to… gel.

If not, we would end up with something recorded in the style of classical music. Don’t get me wrong, I enjoy listening to classical music, but a pure sonic representation of a rock n’ roll band would not sound very pleasing. I use the word pleasing because “good” is subjective. Distortion has become a stylistic choice. Distortion on tracks, mixes, and master recordings imparts a critical element to how we perceive and hear music.

So what is distortion?

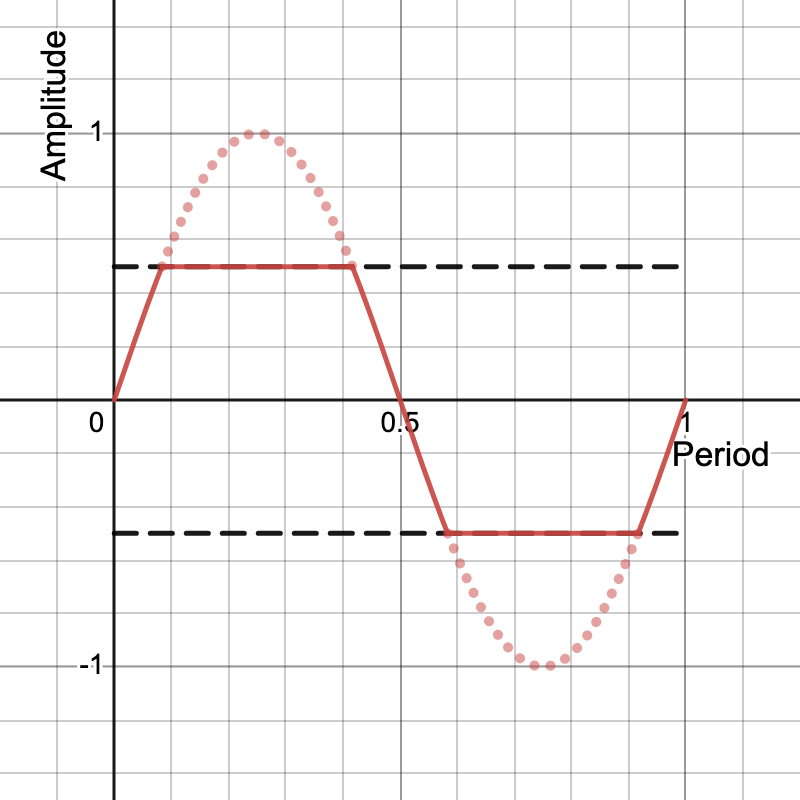

The most basic example is a limiter. A limiter stops an input signal from exceeding a configurable threshold. Say we’re dealing with an audio signal that exists between -1 and +1. If we apply a limiter with thresholds of -0.5 and +0.5, the input signal is clipped at -0.5 and +0.5, even though it needs to travel lower and higher in amplitude. This is called a hard limiter, since there is no complexity at the point of cutoff: we shift abruptly from reproducing the input signal to holding it at -0.5 or +0.5. Here’s what that looks like visually for one period of a sine wave.

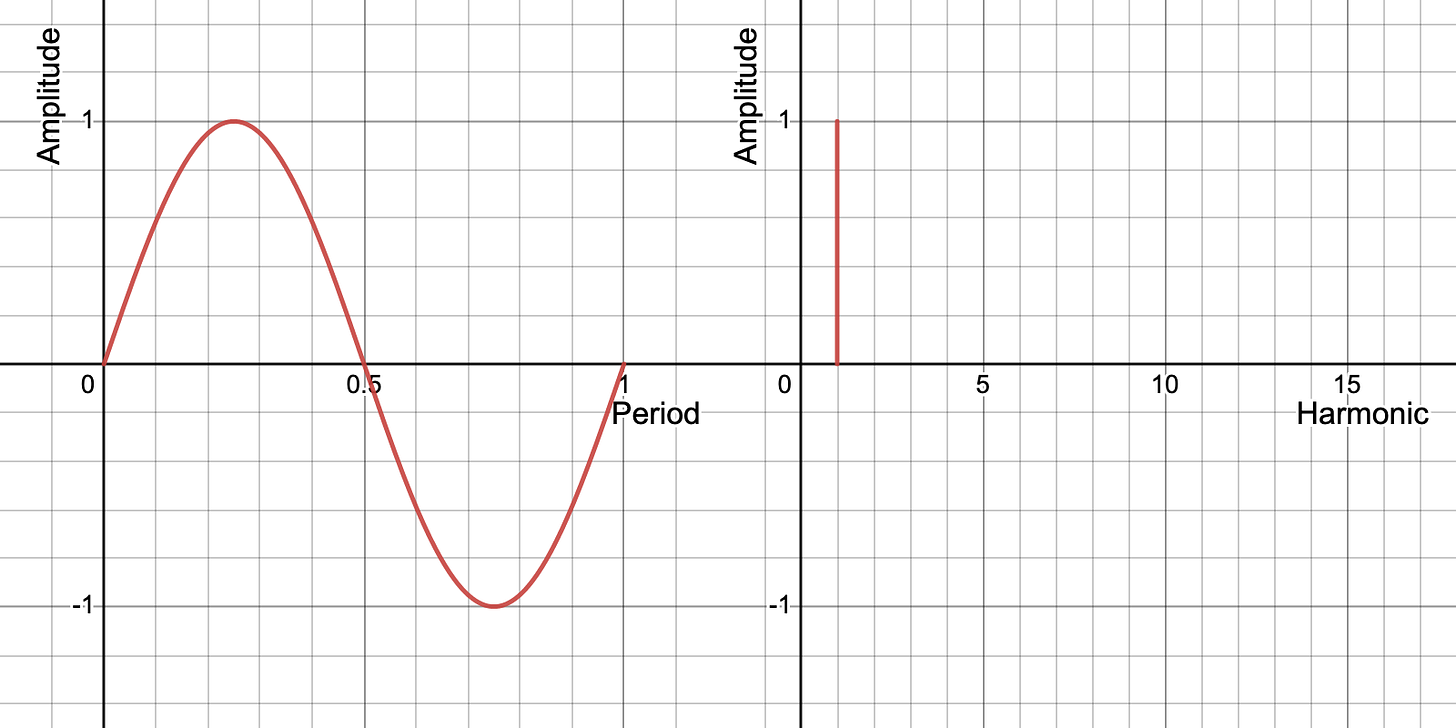
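If you’d rather see numbers than a picture, here is a minimal sketch of the same hard limiter in Python. The function name `hard_limit` and the 16-sample sine are my own choices for illustration, not taken from any particular plugin or library.

```python
import math

def hard_limit(sample, threshold=0.5):
    """Clamp a sample to the range [-threshold, +threshold]."""
    return max(-threshold, min(threshold, sample))

# One period of a full-scale sine wave, 16 samples long (an arbitrary choice).
N = 16
sine = [math.sin(2 * math.pi * n / N) for n in range(N)]
limited = [hard_limit(s) for s in sine]

# Samples inside the thresholds pass through untouched;
# everything beyond them is flattened to -0.5 or +0.5.
for s, y in zip(sine, limited):
    print(f"{s:+.3f} -> {y:+.3f}")
```

Anything between the thresholds comes out exactly as it went in, which is why a quiet signal can pass through a limiter without being changed at all.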

You might notice that this sine wave starts to look more like a square wave. Let’s take a brief moment to look at the harmonic content of sine and square waves.

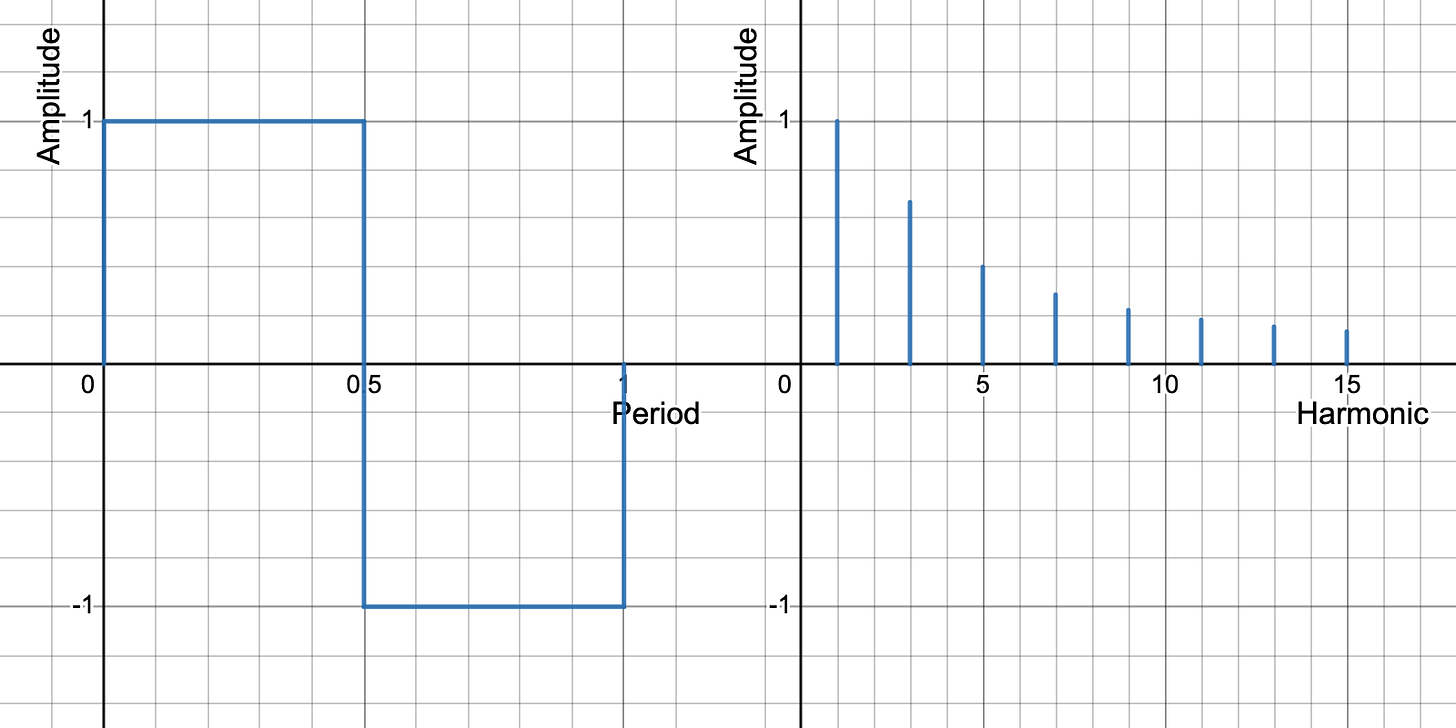

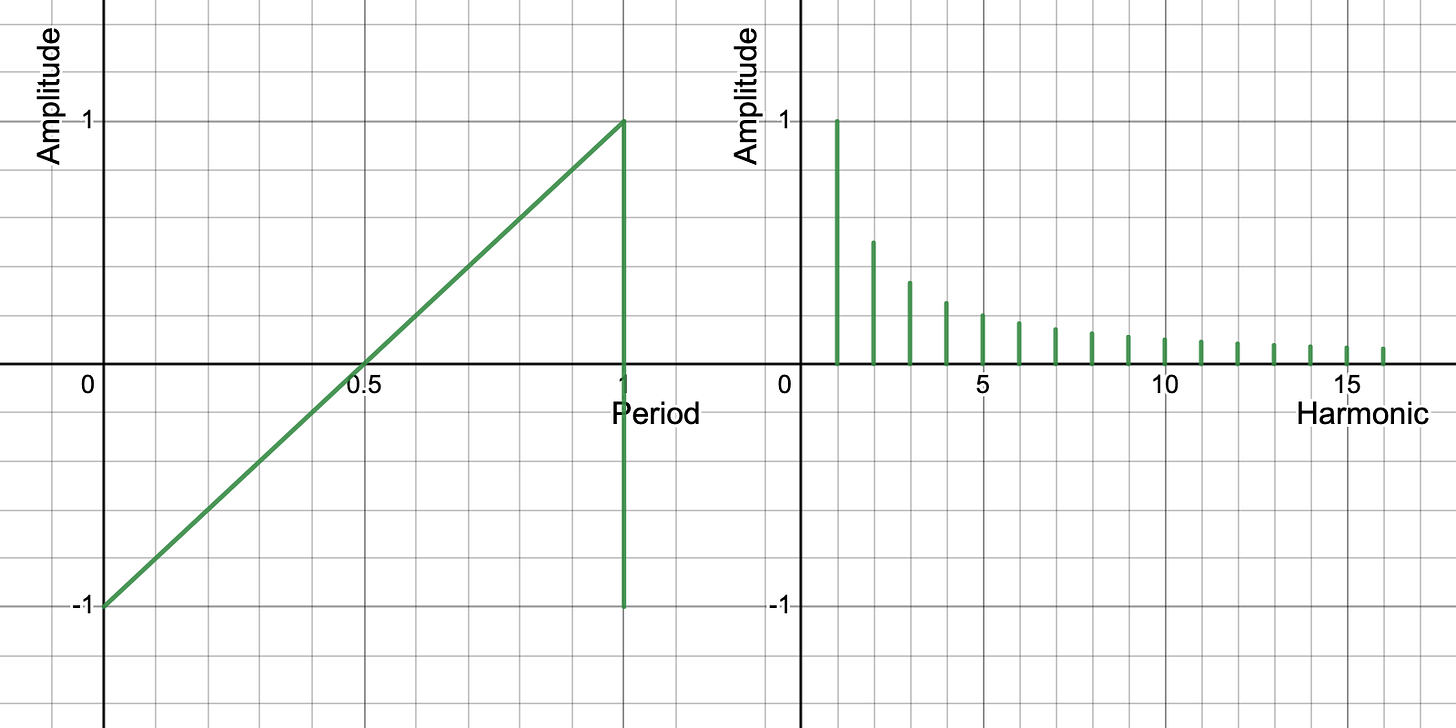

The difference between a sine and square wave is that a square wave has additional harmonic content, while a sine wave is pure in representing only its fundamental frequency. Let’s take one more wave, this time a sawtooth wave, and see its harmonic content.

It’s slightly different from a square wave. While the square wave is composed of only odd order harmonics, the sawtooth wave also includes even order harmonics. At this point, you might reason that as we make our input signal look more like a square or sawtooth wave, the level of harmonic content, even or odd, will increase. If that’s what you’re thinking, you’re correct! Distortion adds harmonic content to the output signal that was not originally present in the input signal.
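To check this for yourself, here is a rough sketch that measures the first few harmonics of a sine, a square, a sawtooth, and a hard-limited sine. It uses only the Python standard library. The helpers `harmonic_amplitude` and `thd` are names I made up for this example, and the THD figure only counts harmonics 2 through 15, which is a simplification I chose and not a measurement standard.

```python
import math

def harmonic_amplitude(signal, k):
    """Amplitude of harmonic k, given exactly one period of a signal."""
    n = len(signal)
    re = sum(x * math.cos(2 * math.pi * k * i / n) for i, x in enumerate(signal))
    im = sum(x * math.sin(2 * math.pi * k * i / n) for i, x in enumerate(signal))
    return 2 * math.hypot(re, im) / n

def thd(signal, max_harmonic=15):
    """Ratio of harmonics 2..max_harmonic (root-sum-square) to the fundamental."""
    fundamental = harmonic_amplitude(signal, 1)
    harmonics = [harmonic_amplitude(signal, k) for k in range(2, max_harmonic + 1)]
    return math.sqrt(sum(h * h for h in harmonics)) / fundamental

# One period of each waveform, sampled at mid-points so that no sample
# lands exactly on a discontinuity.
N = 1024
phase = [(i + 0.5) / N for i in range(N)]
waves = {
    "sine": [math.sin(2 * math.pi * p) for p in phase],
    "square": [1.0 if p < 0.5 else -1.0 for p in phase],
    "sawtooth": [2.0 * p - 1.0 for p in phase],
}
waves["clipped sine"] = [max(-0.5, min(0.5, s)) for s in waves["sine"]]

for name, wave in waves.items():
    levels = ", ".join(f"{harmonic_amplitude(wave, k):.3f}" for k in range(1, 7))
    print(f"{name:>12}: harmonics 1-6 = {levels}   THD = {100 * thd(wave):.1f}%")
```

The sine should show energy only at harmonic 1, the square and the clipped sine only at odd harmonics, and the sawtooth at every harmonic. For the square wave the levels fall off roughly as 4/(πk), and for the sawtooth roughly as 2/(πk).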

I would spend the majority of my time as an audio engineer at Motorola measuring the performance of our audio circuitry under different environmental conditions. Under and over temperature, sustained performance over longer periods of time, with different loading conditions, and varying supply voltage. And I would also evaluate the performance of our microphone and speaker. That was the channel by which we connected with our customers. And an important one, no less. We drafted specifications, and worked hard to achieve them. I would eventually come up with a dynamic spreadsheet to capture all of the different dimensions that I was measuring. Though we had to meet all specifications under normal conditions, we still allowed an extra 20-30% in our gain staging to go beyond specification with respect to Total Harmonic Distortion (THD). It wasn’t until I found myself at a customer site that I realized why we did this. When you’re on the job, whether you’re in construction, or public safety, and your environment is outdoors, and it’s loud, and you need to hear an important message over the air, you need that message to pierce whatever ambient noise might be happening at the same time. By distorting incoming audio, the chances of that message cutting through the noise were much higher. The added harmonic content made the sound more dense, and as a result, more audible. I quickly realized that most of our customers were running their radios this way, and it made sense.

What is true for these customers is true for recorded music as well. Distortion can help accentuate an instrument. Or when more subtly applied, it can add an extra dimension to drums, or vocals. There are a lot of options and the results are varied. There’s a reason why in the history of recorded music we have created separate roles for recording, mixing, and mastering engineers. They serve different purposes. If you haven’t already, check out who worked on some of your favorite recordings. If you like a specific genre of music, the chances are high that your favorite recordings were worked on by the same people. That’s not to say they are the only ones who can do it, but there’s something to learn from how they approach their job, and the results they are able to achieve.

We just looked at a hard limiter, and the harmonic content of square and sawtooth waves. I’m sure you asked yourself why the sawtooth wave has even order harmonics while the square wave does not. Visually speaking, in a waveform made of only odd order harmonics, the second half of each cycle is the first half flipped upside down. When adding even order harmonics, that symmetry breaks, and the waveform is no longer symmetrical, or even. The irony. And so you can think of it like this: as we distort an input signal, the ratio of even and odd order harmonics can be controlled by how closely the output resembles a sawtooth or square wave.

The next logical question is then, how do we get our input signal to look like a sawtooth wave? That involves moving away from hard limiting to… soft limiting. Or compression. Or asymmetrical distortion, or fuzz. Any device that modifies an input signal unevenly. There’s a whole world of effects to explore. And at the heart of it all, each results in a different level of even and odd order harmonics in the output signal.
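Here is a small sketch of that idea, again using only the Python standard library. I’m using `tanh` as a stand-in soft limiter, and a `bias` offset as one simple way to make the curve treat positive and negative swings differently. Both are assumptions on my part for illustration, and the names `soft_clip` and `asymmetric_clip` are hypothetical. This is not a model of any specific pedal, compressor, or tube stage.

```python
import math

def harmonic_amplitude(signal, k):
    """Amplitude of harmonic k, given exactly one period of a signal."""
    n = len(signal)
    re = sum(x * math.cos(2 * math.pi * k * i / n) for i, x in enumerate(signal))
    im = sum(x * math.sin(2 * math.pi * k * i / n) for i, x in enumerate(signal))
    return 2 * math.hypot(re, im) / n

def soft_clip(x, drive=3.0):
    """Symmetrical soft limiter: positive and negative swings are shaped alike."""
    return math.tanh(drive * x)

def asymmetric_clip(x, drive=3.0, bias=0.3):
    """Same curve with a shifted operating point, so the two halves differ.
    Subtracting tanh(bias) keeps silence in equal to silence out."""
    return math.tanh(drive * x + bias) - math.tanh(bias)

# One period of a full-scale sine wave as the test input.
N = 1024
sine = [math.sin(2 * math.pi * (i + 0.5) / N) for i in range(N)]
symmetric = [soft_clip(s) for s in sine]
asymmetric = [asymmetric_clip(s) for s in sine]

for name, wave in (("symmetric", symmetric), ("asymmetric", asymmetric)):
    second = harmonic_amplitude(wave, 2)
    third = harmonic_amplitude(wave, 3)
    print(f"{name:>10}: 2nd harmonic = {second:.4f}, 3rd harmonic = {third:.4f}")
```

In this sketch the symmetrical curve should produce essentially no second harmonic, only odd ones, while the biased curve adds a clearly measurable second harmonic. Try changing `drive` and `bias` to see how the balance between even and odd order harmonics shifts.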

In the physical world, we have devices like transformers, transistors, and vacuum tubes that act as couplers and amplifiers, providing limiting characteristics when overdriven. Additionally, devices like optocouplers, and more complex circuits like voltage followers give compressors their soft limiting characteristics. The good news is that we’re able to replicate these characteristics in the digital domain, and achieve similar results with digital signal processing. The possibilities are endless, and we’re still only at the beginning of the next phase of recorded music.

So stop reading. Open up your DAW (Digital Audio Workstation). And throw a spectrum analyzer on one of your tracks and compare the before and after effect of your favorite distortion/fuzz/overdrive/compressor/limiter/tape saturation plugin. Now you have a better idea of what’s happening to your music. Just remember, with great knowledge comes great responsibility.

You can find some of the software I use to make music here! https://themusicologygroup.com